1. Abstract

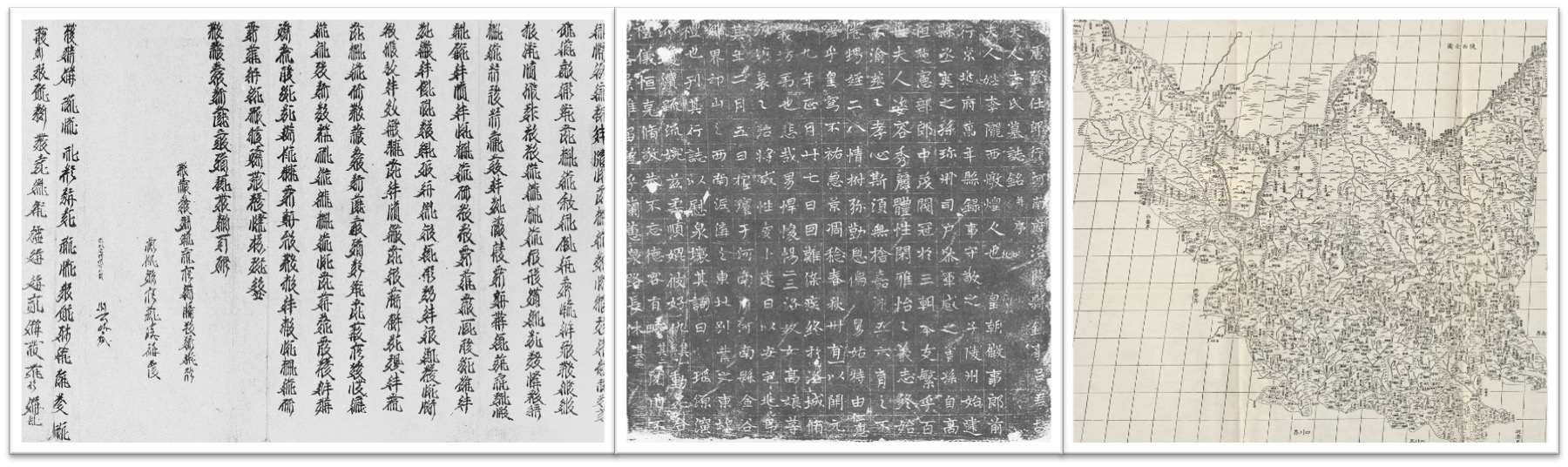

Deep learning methods have greatly improved the text recognition accuracy for most modern languages. However, the OCR for historical documents is challenging, especially for the handwritten or printed ones without a training dataset, in which each character has hundreds or thousands of labeled examples. We focus on the recognition of the following types of primary sources for studying Chinese history, including handwritten Tangut historical documents, stele texts written in ancient Chinese, and place names on Chinese historical maps, as shown in Figure 1.

Figure 1. Tangut Historical Documents, Steles with Ancient Chinese Characters and Historical Maps

Tangut, invented and used by the West Xia State in Chinese history, has distinguished for more than 1000 years. The main Tangut documents were found and evacuated from Khara-Khoto by P. K. Kozlov in the early 20th century, which are important primary sources complementing to the Chinese historical documents for studying that period of Chinese history. A large collection of steles with texts in ancient Chinese keeps important information of the time it was built, which needs to be digitized for history study. Historical maps are different from the first two types because they contain texts (place names) as well as images. The positions of place names on the map are random and the directions of the place names vary. Though the three tasks are different, we propose a unified workflow and framework for recognizing texts in these historical documents.

Our workflow has four phases: (1) text detection and segmentation, (2) character annotation, (3) model training, and (4) text line recognition. The core function of the workflow is deep convolutional neural networks (DCNNs). The multiply stacked convolutional layers are used in all the four phases of the workflow. They extract features that can be used in the character classification (phase 4) and text line detection (phase 1) as well as in the generation of supported characters for rare characters (phase 2). For the character classification and generation, a fully connected layer is added at the end of the stacked convolutional layers to output the predicted class for a given input image. For the text line detection, the fully connected layer is removed and a fully convolutional network (FCN) is attached to do pixel-level segmentation.

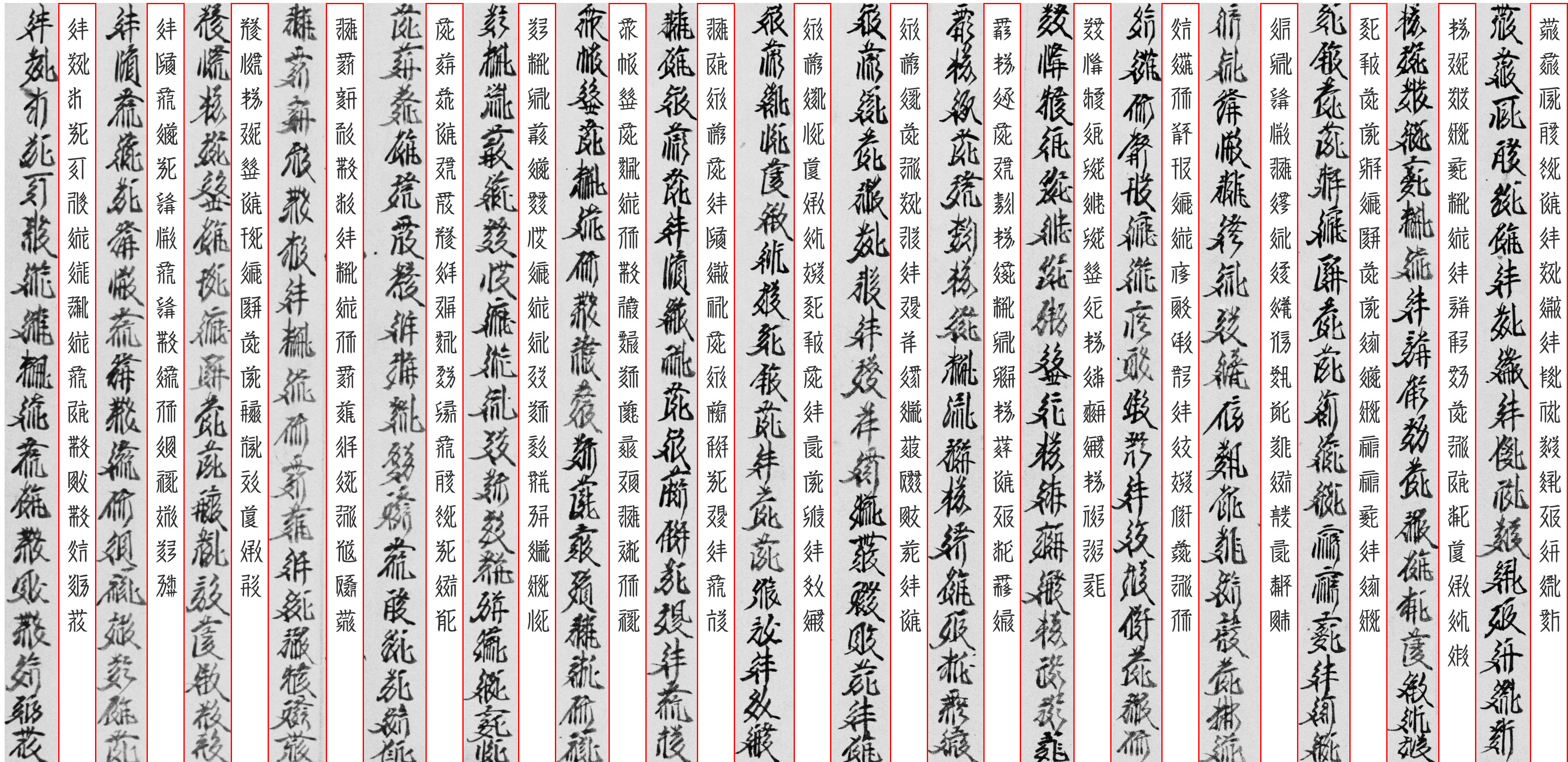

Text line detection and segmentation is a part of document layout analysis, where FCN [1]and U-Net[2][3] are usually used. We use a modified U-Net to detect text lines in the Chinese historical documents and then segment them from the document images. The characters in the lines are annotated manually when we had not enough labeled single characters at first. We have developed a method to generate support examples for representing rarely used characters, Target-Directed Mixup for labeling characters[4]. When most characters have enough examples, we can synthesize a large number of text lines using the labeled characters to form the training dataset for the text line recognition. We train a model for recognizing the segmented lines by combining a convolutional neural network and a recurrent neural network based on the synthesized text lines with CTC[5] as the loss function. For the historical maps, the detected regions with places names are segmented and restored into normal orientation, and then they are fed into the trained model for recognition. The recognized text lines are shown in Figure 2 as an example, where human experts could correct the results.

Figure 2. Segmentation and Recognition Result of a Page of Tangut Documents

The workflow has been successfully used in these three tasks. Although the framework is designed for recognizing ancient Chinese and Tangut characters, which are similar in appearance, it could be modified and applied in recognizing other documents without an existing training dataset.

Acknowledgment

The author would like to thank the reviewers. This work is supported by MOE (Ministry of Education in China) Project of Humanities and Social Sciences (Project No. 17YJCZH239).

References

[1] B. Barakat, A. Droby, M. Kassis, and J. El-Sana, “Text Line Segmentation for Challenging Handwritten Document Images using Fully Convolutional Network,” in 2018 16th International Conference on Frontiers in Handwriting Recognition (ICFHR), Niagara Falls, NY, USA, Aug. 2018, pp. 374–379.

[2] O. Ronneberger, P. Fischer, and T. Brox, “U-net: Convolutional networks for biomedical image segmentation,” in International Conference on Medical image computing and computer-assisted intervention, 2015, pp. 234–241.

[3] K. Ma, Z. Shu, X. Bai, J. Wang, and D. Samaras, “Docunet: document image unwarping via a stacked U-Net,” in Proceedings of the IEEE conference on computer vision and pattern recognition, 2018, pp. 4700–4709.

[4] G. Zhang and Y. Zhao, “Target-Directed MixUp for Labeling Tangut Characters,” in 2019 International Conference on Document Analysis and Recognition (ICDAR), Sep. 2019, pp. 202–207.

[5] B. Shi, X. Bai, and C. Yao, “An End-to-End Trainable Neural Network for Image-Based Sequence Recognition and Its Application to Scene Text Recognition,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 39, no. 11, pp. 2298–2304, Nov. 2017.